Find quietude. Observe whatever is around you. If it seems banal, discover it to be fascinating and mysterious. Ignore distractions, otherwise known as ‘everybody else’. Ask simple questions that puzzle you. Be patient in pondering them.

That is how I imagine Gregor Mendel might answer us today if we asked him: How — I mean how! — did you achieve your stunning intellectual breakthroughs, on which we today base our understanding of biology?

Put differently: Let’s pretend that Gregor Mendel were alive today instead of in the 19th century, and that he were not an Augustinian monk in the former Austrian Empire but a wired and connected, über-productive modern man with an iPhone, a Twitter account, cable television, a job with bosses who email him on the weekend, etc etc.

Would this modern Mendel be able to achieve his own breakthrough in those circumstances?

So far in my rather long-running thread about the greatest thinkers in history, I’ve featured mostly philosophers and historians, with the odd scientist and even one yogi. But it occurred to me that Mendel belongs in that pantheon, not only for his thought but also for his thinking. I think he offers us a timely lifestyle lesson, an insight that fits the Zeitgeist of our hectic age.

So: First, a brief recap of his breakthrough. Then my interpretation of how his lifestyle and thought process made that breakthrough possible (and why ours might make such breakthroughs harder).

1) Mendelian genetics

Mendel was an Augustinian monk in what used to be the Austrian Empire (and what is now the Czech Republic). He had an open and inquisitive mind and, as a monk, wasn’t all that busy, so he had plenty of spare time. He liked to breed bees. Then he began breeding peas. That’s right. Peas.

Peas intrigued him. (Would they intrigue you? What else does not intrigue you?) He found peas interesting because they had flowers that were either white or purple and never anything else. (Would you find that interesting?)

Mendel contemplated what peas could therefore teach him about how parents pass on traits to their offspring, ie what we would call genetics.

At the time, conventional wisdom held that the traits of parents are somehow mixed in their children. If parents were paint buckets, say, then a yellow dad and a blue mom would make a green baby bucket, and so on. (It’s interesting that nobody spotted how implausible this was: After several generations every bucket, ie every living thing, would have to end up mud-brown. Every creature would look the same. Instead, nature is constantly getting more colorful, more diverse, with more and stranger new species.)
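To see why the paint-bucket theory washes everything out, here is a minimal sketch in Python. It is a toy model, not anything Mendel did: the trait is a made-up "hue" between 0.0 (yellow) and 1.0 (blue), the function name `blend_generation` is hypothetical, and every child is assumed to be the exact average of two randomly chosen parents.

```python
import random
import statistics

def blend_generation(population, rng):
    """Each child is the exact average of two randomly chosen parents."""
    return [
        (rng.choice(population) + rng.choice(population)) / 2
        for _ in population
    ]

rng = random.Random(0)  # fixed seed so the run is repeatable

# Hypothetical trait: a "hue" between 0.0 (yellow) and 1.0 (blue).
population = [rng.random() for _ in range(1000)]

# Track how much variety (standard deviation of hue) is left each generation.
spread_history = [statistics.pstdev(population)]
for _ in range(10):
    population = blend_generation(population, rng)
    spread_history.append(statistics.pstdev(population))

print([round(spread, 3) for spread in spread_history])
```

Under these assumptions, averaging two independent parents halves the variance, so the spread of hues shrinks every generation and the population drifts toward one uniform color. That is the mud-brown outcome the theory would predict and nature does not show.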

So Mendel, in the late 1850s and early 1860s, started playing with his peas. Pea plants fertilize themselves, so Mendel cut off the stamens of some so that they could no longer do that. Then he used a little brush and fertilized the castrated pea plant with pollen from some other pea plant. He thereby had total control over who was dad and who was mom.

He was now able to cross-breed the peas with purple flowers and the peas with white flowers. So he did. Then he waited.

Surprise #1:

Already in the next generation, Mendel could rule out the prevailing “paint-bucket-mixing” theory. No baby pea plants had lighter purple (or striped or dotted) flowers. Instead they all had purple flowers.

So he took those new purple-flowered pea plants and cross-bred them again. And again, he waited.

Surprise #2:

In the next generation, most pea plants again had purple flowers. But some now had white flowers. Wow! How did that happen?

Moreover, the ratio in this generation between purple and white flowers was almost exactly 3:1. Hmm.

Mendel kept doing these experiments, and kept thinking, and then inferred the simple but shocking conclusion:

- Each parent had to be contributing its version of a given trait (white vs purple, say) to the offspring.

- Each baby thus had to have both versions of every trait, but showed in its own appearance only one version, which had to be dominant.

- The other (“recessive”) version, however, did not go away, and when these pea plants had sex again, they shuffled the two versions and randomly passed one on to their offspring (with the other coming from the other parent), so that their baby again had two versions.

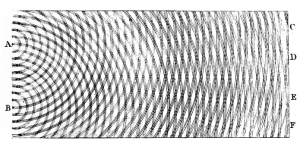

This looks as follows:

In the second generation, every pea plant has a purple (red, in this picture) and a white version, one from each parent, but since the purple is dominant, every flower looks purple.

In the next generation,

- one fourth will have a purple from dad and a purple from mom (and look purple),

- one fourth will have a purple from dad and a white from mom (and still look purple),

- one fourth will have a white from dad and a purple from mom (and still look purple), and

- one fourth will have a white from dad and a white from mom (and look white).
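The four equally likely combinations above can be enumerated in a few lines of Python. This is only an illustrative sketch: the labels "P" (dominant purple version) and "w" (recessive white version) and the helper names `phenotype` and `cross` are my own, not Mendel's notation.

```python
from collections import Counter
from itertools import product

def phenotype(genotype):
    """A plant looks purple if it carries at least one dominant "P"."""
    return "purple" if "P" in genotype else "white"

def cross(dad, mom):
    """List the equally likely offspring: one version from dad, one from mom."""
    return [from_dad + from_mom for from_dad, from_mom in product(dad, mom)]

# Pure purple crossed with pure white: every child is a purple/white hybrid.
hybrids = cross("PP", "ww")
hybrid_counts = Counter(phenotype(g) for g in hybrids)

# Two hybrids crossed with each other: the four cases from the list above.
next_generation = cross("Pw", "Pw")
next_counts = Counter(phenotype(g) for g in next_generation)

print(hybrid_counts)
print(next_generation, next_counts)
```

All of the hybrids come out purple, and the cross between two hybrids yields three purple-looking combinations for every white one, which is where the 3:1 ratio comes from.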

The rest, you might say, is history. With all our amazing breakthroughs in biology in the 20th century, we merely elaborated on his insights, in the process explaining the mechanism of evolution. (Darwin, coming up with that idea at the same exact time, had no knowledge of Mendel’s breakthrough.)

In today’s language, Mendel

- showed the difference between genotype and phenotype. (Your genotype might be white/purple, for example, but your phenotype would be purple.)

- understood the basic idea of meiosis (the division of a cell into haploid gametes, ie sperm cells or eggs, each with half of the mother cell’s chromosomes, randomly chosen),

- described how two gametes then merge sexually to form a diploid zygote (ie, a cell with all chromosomes paired up again, one member of each pair coming from each parent),

- explained how some versions of the gene pairs, called alleles (such as purple or white), are expressed and some not, even as those not expressed can re-emerge in the phenotype in the next generation.

DNA, RNA, ribosomes and all that were merely detail.

2) How was it possible?

Let’s make ourselves aware, first, of what it must have been like for Mendel during these years (this is purely conjecture):

- He got up.

- He prayed.

- Had breakfast.

- Went into the garden.

- Looked at the pea flowers for a long time.

- Watered them.

- Took a break.

- Watched the peas some more.

- Thought about them.

- Dozed off for a nap.

- Woke up and had an idea, still inchoate in his mind.

- Went to bed.

- Thought about it some more….

You get the idea. Not exactly stressful. Few interruptions. Lots of waiting (how long is one generation of peas anyway?).

He was, we would say, switched off. He was not multi-tasking, he did not have adrenaline coursing through his veins as he answered a text message while watching a video stream while writing a Powerpoint …

Compare his time with his pea plants to Einstein‘s time at the Bern patent office, where he was utterly underemployed and could easily have been bored, but instead did thought experiments and had his “miracle year”.

Or compare it to Isaac Newton’s time after he had to leave the action of Cambridge (because plague broke out) and returned to the isolation of his family farm with nothing to do except watch apples drop from trees….

Or compare it to the time when Gautama Siddhartha (aka the Buddha) withdrew from all action and sat, just sat, under a tree, with the birds pooping on his head until there was a pile of guano on his hair, with his flesh melting from his bones because he was too deep in concentration to eat…..

Lesson #1:

Good stuff can happen during downtime (even if you didn’t volunteer for it).

Corollary: Can good stuff happen during uptime? You may have to take time out to be creative.

Lesson #2:

Be amazed.

Corollary: Don’t assume the things and people in your daily life are boring.

Lesson #3:

Turn the devices off.

Corollary: Distraction not only kills people, it also kills thought.

Lesson #4:

Be patient.

Corollary: You can’t breed peas in internet time. Nor novels, scripts, songs, paintings…

Lesson #5:

Look for the simple.

Corollary: The more bewildering the complexity observed, the simpler the solution.

(See also: Gordian knot.)

Lesson #6:

It doesn’t have to be complete to be original.

Corollary: It took us a century to explain the process Mendel grasped; an idea is good even if it “merely” starts something.

(See also: Incompleteness theorem. Mr Crotchety’s favorite — need I say more?)

Lesson #7:

Don’t expect the world to get it right away.

Corollary: If it took us a century to understand Mendel’s breakthrough, we might take a while even for yours. 😉
